Most ad budgets don’t disappear all at once. They bleed out slowly, one underperforming creative at a time. You launch a campaign, wait two weeks, and then realize the version you went with was the wrong one. Ad testing exists so that doesn’t happen.

At Denote, we work with marketers and e-commerce teams who run ads across Facebook, TikTok, and Instagram every day. We’ve seen what happens when teams skip testing (wasted budget, frustrated stakeholders) and what happens when they do it right (lower CPAs, faster iteration, creatives that actually convert). This guide is built on that experience, not theory.

If you’re a small team or a solo marketer trying to make smarter decisions before you spend, this is for you.

Who We Are & Why We Tested This

We built Denote as an ad library and creative intelligence tool, which means we spend a lot of time inside ad data. Our team looks at thousands of creatives every week: what’s running, how long it’s been running, and what signals suggest it’s working.

That vantage point taught us something early on: the ads that perform best aren’t necessarily the most creative ones. They’re the ones that were validated before launch.

We started documenting our own ad testing process about 18 months ago, after a campaign we felt confident about flatlined in week one. The creative looked great internally. The brief was solid. But it missed, and we had no pre-launch data to tell us why.

That was the turning point. We rebuilt our testing workflow from scratch, and this guide reflects what we learned.

What Is Ad Testing? (And What It’s Not)

Ad testing is the process of evaluating how your ad creative is likely to perform before you put real budget behind it. You’re not guessing which version is better; you’re collecting evidence.

The Real Definition Beyond the Textbook

Most definitions make ad testing sound like a formal research exercise. In practice, it’s just a structured way of answering: will this ad connect with the people I’m trying to reach?

It looks at things like:

- Does the hook grab attention in the first 2–3 seconds?

- Is the message clear enough that someone scrolling fast will get it?

- Does the call-to-action feel natural or forced?

- Will people remember the brand after seeing it?

Pre-Launch vs Post-Launch Testing: What’s the Difference?

Pre-launch testing happens before you spend a dollar on media. You’re using surveys, panels, predictive tools, or competitor analysis to stress-test the creative.

Post-launch testing (like A/B testing in-platform) uses real audience data, but it costs money to run and takes time to generate results.

The best teams do both, but if you’re resource-constrained, pre-launch testing is where the leverage is. You catch problems before they cost you.

What Ad Testing Won’t Tell You

This is worth saying upfront: ad testing is not a guarantee. It reduces risk; it doesn’t eliminate it. A creative that tests well can still underperform in the wild due to audience fatigue, poor targeting, or a bad placement. Testing gives you better odds, not certainty.

How We Chose Our Ad Testing Criteria

Before we started comparing methods, we defined what “good” looked like for our team. These were the four filters we used, and we’d suggest any small team go through the same exercise.

Speed: How Fast Can You Get Actionable Insights?

We were running campaigns on short lead times. A testing method that took 3 weeks to return results wasn’t useful. We needed something that could fit into a 48–72 hour creative review cycle.

Accuracy: Does It Reflect Real Audience Behavior?

We’d been burned by focus groups before: people say they love an ad in a room, then scroll past it in real life. We favored methods tied to actual behavioral signals over self-reported opinions.

Cost: Is the ROI Worth It for Your Team Size?

We’re not a 50-person agency. We needed testing that didn’t require a five-figure research budget to run.

Scalability: Can It Handle Multiple Creatives at Once?

When you’re testing 6–8 creative variants for a single campaign, the method needs to scale. One-by-one manual review doesn’t cut it.

Ad Testing Methods We Actually Tried

A/B Testing — What Worked and Where It Failed Us

How We Set It Up

We ran split tests inside Meta Ads Manager, splitting budget evenly between two creative variants targeting the same audience. Standard setup, nothing fancy.

Results After 3 Weeks

The winner was usually obvious by day 10. CTR differences were meaningful (sometimes a 40–60% relative spread between variants), and we could make decisions with reasonable confidence.
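If you want to put a number on “reasonable confidence,” here is a minimal sketch of one common approach: a two-proportion z-test on CTR. This is not something Ads Manager hands you in this form; the function name and the click and impression counts below are made up for illustration.

```python
import math

def ctr_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided two-proportion z-test on CTR.

    Returns (relative_lift_of_b_over_a, z_score, p_value).
    Relies on the normal approximation, so it needs a reasonable
    number of clicks in each variant to be trustworthy.
    """
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both variants perform the same
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (ctr_b - ctr_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    lift = (ctr_b - ctr_a) / ctr_a
    return lift, z, p_value

# Hypothetical numbers: variant A at 1.0% CTR, variant B at 1.5% CTR
lift, z, p = ctr_z_test(100, 10_000, 150, 10_000)
print(f"lift={lift:.0%}  z={z:.2f}  p={p:.4f}")
```

The same 50% spread on a tenth of the impressions would not clear a typical 0.05 threshold, which is why a big-looking gap on day two is not yet a winner.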

Who Should Use A/B Testing

Teams with consistent monthly ad spend ($5K+), enough runway to let tests run, and campaigns that aren’t time-sensitive.

Who Should Skip It

Anyone running a one-time promotion, a launch campaign with a hard deadline, or a brand new audience where you have no baseline data. A/B testing in those scenarios burns budget to tell you something you needed to know before you started.

Surveys & Focus Groups — Slower Than We Expected

What We Tested

We ran two survey-based tests through an online panel: one for a product ad and one for a brand awareness concept. Turnaround was about 5–7 business days.

The Honest Downside: Response Bias

People are nicer in surveys than they are with their thumbs. We got high “purchase intent” scores on an ad that later had a 0.4% CTR. The disconnect was significant enough that we stopped using surveys as a primary validation method.

Best Use Case

Surveys still have a place, specifically for brand messaging work where you’re trying to understand emotional resonance, not predict click behavior. Use them as a complement, not a crutch.

AI-Powered Predictive Testing — The Method We Ended Up Choosing

Why We Switched to This

After two frustrating months of slow feedback cycles, we moved to predictive tools that analyze creative elements (visual attention, contrast, hierarchy, message clarity) without needing to run live media.

What the Data Actually Showed

Our first round of predictive analysis flagged something we’d completely missed: our primary CTA was in a low-attention zone on mobile. We repositioned it, and the revised creative outperformed the original by 34% in CTR on launch.

Time Saved vs Traditional Methods

Old process: brief → design → feedback → revise → survey → revise → launch. About 3 weeks.

New process: brief → design → predictive test → revise → launch. About 4–5 days.

Limitations We Found

Predictive tools are excellent for visual and structural analysis. They’re less useful for copy testing, nuanced emotional resonance, or highly niche audiences. We still run quick copy checks with a small internal panel before anything goes live.

Side-by-Side Comparison of Ad Testing Methods

Method Comparison Table

Which Method Won for Our Team — And Why

We settled on a combination: AI predictive testing pre-launch + A/B testing post-launch for campaigns with longer run times.

For time-sensitive campaigns, predictive testing alone is the call. It gives us enough confidence to launch without burning budget on a test that won’t finish before the campaign ends.

Step-by-Step: How to Run Ad Testing That Actually Works

Step 1 — Define Your Testing Goal Before Touching Any Creative

What are you trying to learn? “Which ad is better” is not a goal. “Which headline drives higher CTR with cold audiences” is.

Anchor every test to one metric. If you try to optimize for CTR, brand recall, and conversion rate simultaneously, you’ll end up with data that contradicts itself.

Step 2 — Build Meaningfully Different Variations (Not Just Color Swaps)

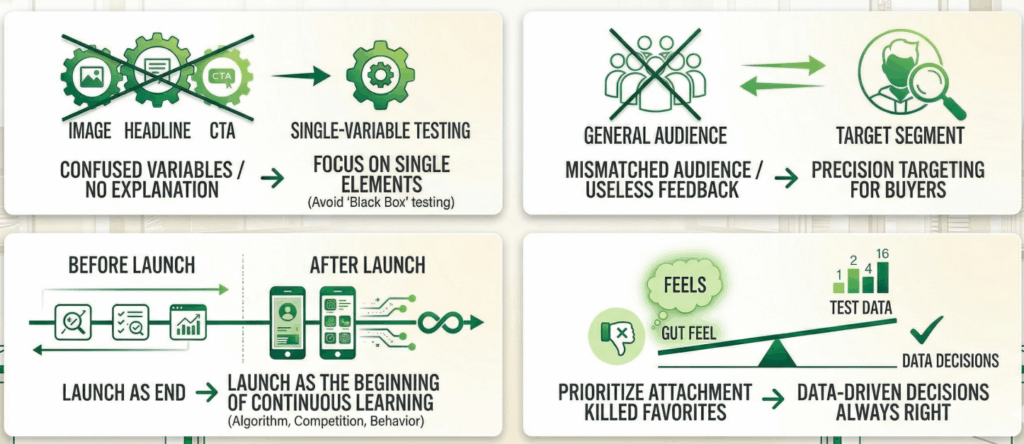

Change one significant element per test: the hook, the offer framing, the visual format, the CTA copy. If you change three things at once, you won’t know what moved the needle.

We typically test 2–3 variants per campaign. More than that and budget gets spread too thin for any variant to generate statistically meaningful data fast enough to matter.
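To see why extra variants slow things down, here is a rough sketch of the standard sample-size formula for comparing two proportions, plus a helper that turns it into days. The baseline CTR, the lift you hope to detect, and the daily impression figure are all assumptions you would replace with your own numbers; the function names are ours for illustration.

```python
import math
from statistics import NormalDist

def impressions_per_variant(baseline_ctr, relative_lift, alpha=0.05, power=0.8):
    """Approximate impressions each variant needs to detect the given
    relative CTR lift with a two-sided test at the given alpha and power."""
    p1 = baseline_ctr
    p2 = baseline_ctr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def days_to_read(per_variant, variants, daily_impressions):
    """Days until every variant has enough impressions, assuming the
    daily impressions are split evenly across variants."""
    return math.ceil(per_variant * variants / daily_impressions)

# Hypothetical inputs: 1% baseline CTR, hoping to detect a 40% relative lift
needed = impressions_per_variant(0.01, 0.40)
for variants in (2, 3, 6):
    print(variants, "variants:", days_to_read(needed, variants, 8_000), "days")
```

The days scale linearly with the number of variants, and smaller lifts need far more impressions, so a six-way test on a modest budget can easily outlast the campaign it was meant to inform.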

Step 3 — Choose the Right Method for Your Timeline & Budget

Use this as a rough guide:

- Less than 1 week to launch → Predictive AI tool only

- 1–3 weeks, moderate budget → Predictive tool + quick survey check

- Ongoing campaign, higher budget → Live A/B test

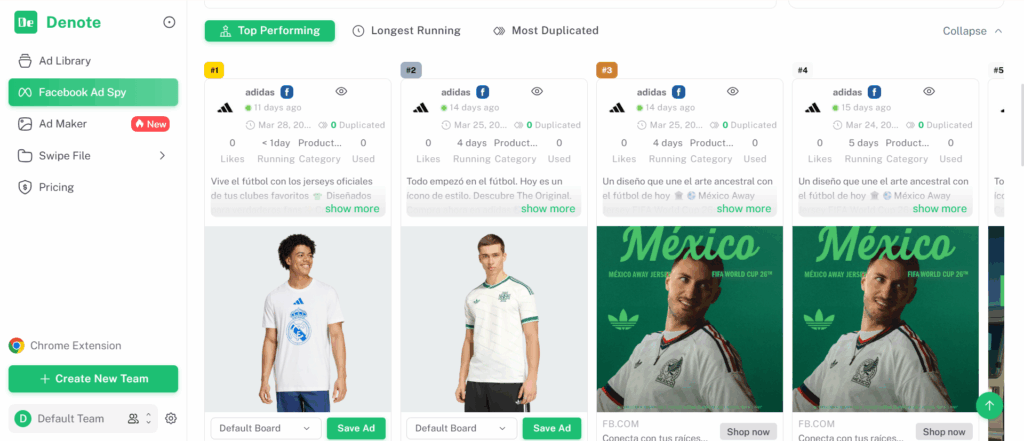

If you want to understand what your competitors are running before you commit to a direction, tools like Denote’s Facebook Ad Spy let you see what’s actively running in your category, which is useful context before you design your own variants.

Step 4 — Analyze Results Against Your Original Goal

Don’t let secondary metrics distract you. If you tested for CTR, evaluate on CTR. High video views with low CTR means something, but it doesn’t mean your test succeeded.

Document what you found and why you think it happened. That institutional knowledge compounds over time.

Step 5 — Make One Change at a Time, Then Retest

The temptation after a bad test is to change everything. Resist it. Isolate the variable, make the adjustment, and retest. It’s slower in the short term and dramatically faster across a quarter.

Step 6 — Launch with a Post-Live Monitoring Plan

Even the best pre-tested creative needs to be monitored after launch. Set a check-in point at 48 hours and again at 7 days. If performance diverges significantly from what testing predicted, that’s a signal worth understanding, not just a problem to fix.
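A check-in can be as simple as comparing observed CTR to the figure you expected going in. The sketch below assumes you have an expected CTR to compare against (from a benchmark or a past campaign); the 25% tolerance and the sample numbers are arbitrary placeholders, not a recommendation.

```python
def check_in(expected_ctr, clicks, impressions, tolerance=0.25):
    """Compare observed CTR with the expected CTR.

    Returns (observed_ctr, relative_divergence, status), where status is
    "investigate" when the divergence exceeds the tolerance in either direction.
    """
    observed = clicks / impressions
    divergence = (observed - expected_ctr) / expected_ctr
    status = "investigate" if abs(divergence) > tolerance else "on track"
    return observed, divergence, status

# Hypothetical cumulative results at the two check-in points
checkpoints = {
    "48 hours": (110, 10_000),
    "7 days": (320, 40_000),
}
for label, (clicks, impressions) in checkpoints.items():
    observed, divergence, status = check_in(0.012, clicks, impressions)
    print(f"{label}: CTR {observed:.2%}, {divergence:+.0%} vs expected, {status}")
```

Note that the check flags overperformance too. A creative that beats expectations by a wide margin is just as worth understanding as one that misses.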

Mistakes We Made (So You Don’t Have To)

Mistake 1 — We Tested Too Many Variables at Once

Early on, we’d change the image, headline, and CTA between variants and then wonder why we couldn’t explain the result. Testing became a black box. We fixed this by limiting changes to one element per test, even when it felt slow.

Mistake 2 — We Used the Wrong Audience Segment

We ran pre-launch surveys with a broad general population panel instead of our actual target audience. The feedback was useless because the people we surveyed weren’t our buyers. Match your test audience to your real audience, always.

Mistake 3 — We Stopped Testing After Launch

We used to treat launch as the finish line. It isn’t. Post-launch data is where you learn what pre-launch tests can’t tell you: how your creative holds up against real competition, real scrolling behavior, and a real algorithm.

Mistake 4 — We Trusted Gut Feel Over Data

The creative that feels best internally is often the one the team is most attached to, not the one that performs. We’ve killed our personal favorites more times than we can count based on test data. It still stings. It’s always the right call.

Ad Testing Tools Worth Considering in 2026

Best for AI-Powered Speed

Tools like Neurons and Dragonfly AI use computer vision to analyze where attention lands in a creative before it ever runs. Good for visual hierarchy checks and pre-launch validation.

Best for Survey-Based Depth

Attest and Kantar offer panel-based testing with demographic targeting. Slower and more expensive, but useful for brand campaigns where emotional resonance matters.

Best for Small Teams on a Budget

If you’re running lean, the most cost-effective starting point is often competitor analysis: understanding what’s already working in your space before you design anything. Denote’s ad library lets you browse and save active ads from Facebook, TikTok, and Instagram, filtered by niche or competitor, which is useful for benchmarking your creative before you spend on formal testing.

Tool Comparison Table

Who Should NOT Prioritize Ad Testing Right Now

If Your Budget Is Under $1K/Month

At very small scale, the overhead of structured testing outweighs the benefit. Focus on competitor analysis and creative inspiration first to build a baseline of what works, then add formal testing once you have meaningful spend.

If You’re Running One-Off Campaigns

A single promotional campaign with a hard end date doesn’t benefit from A/B testing because you won’t have enough time to collect actionable data. Use predictive tools or gut-check against competitor creative instead.

If You Haven’t Nailed Your Target Audience Yet

Testing creative before you’ve validated your audience is like testing car models before you know what roads you’re driving on. Audience clarity comes first. Creative testing after.

Our Final Verdict After Testing Multiple Methods

What We Use Today and Why

Our current stack: predictive AI testing for pre-launch visual validation, Denote’s ad library for competitor benchmarking during the brief stage, and in-platform A/B testing for campaigns with 3+ week run times. That combination covers pre-launch risk, creative inspiration, and ongoing optimization without requiring a large research budget.

What We’d Do Differently from Day One

We’d start with competitor analysis earlier. Before briefing any creative, spend 20 minutes in an ad library looking at what’s actively running in your category. Ads that have been running for 30+ days are very likely performing, since advertisers rarely keep paying for creative that doesn’t work. That’s the closest thing to a free signal about what works.
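If you keep your saved ads in a spreadsheet or export, the 30-day filter is easy to automate. This is only a sketch: the field names (`first_seen`, `active`) and the sample records are invented for illustration and do not reflect any particular ad library’s export format.

```python
from datetime import date

def long_running(ads, today, min_days=30):
    """Return active ads that have been running at least min_days,
    longest-running first."""
    keep = [
        ad for ad in ads
        if ad["active"] and (today - ad["first_seen"]).days >= min_days
    ]
    return sorted(keep, key=lambda ad: ad["first_seen"])

# Hypothetical saved ads
saved_ads = [
    {"name": "UGC unboxing", "first_seen": date(2026, 1, 5), "active": True},
    {"name": "Static discount", "first_seen": date(2026, 2, 20), "active": True},
    {"name": "Founder story", "first_seen": date(2025, 12, 1), "active": False},
]
for ad in long_running(saved_ads, today=date(2026, 3, 1)):
    print(ad["name"], (date(2026, 3, 1) - ad["first_seen"]).days, "days")
```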

The One Metric That Changed How We Think About Ad Testing

Not CTR. Not ROAS. It’s creative lifespan: how long an ad keeps performing before it fatigues. Our best-tested creatives consistently run 2–3x longer before fatigue sets in compared to creatives we launched without pre-testing. That’s the compounding value of doing this right.
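There is no single agreed definition of fatigue, so to track lifespan you have to pick one. The sketch below uses one possible working definition: an ad has fatigued on the first day its CTR falls below 70% of its best day so far. Both the threshold and the daily CTR series are illustrative assumptions, not our production rule.

```python
def creative_lifespan(daily_ctr, fatigue_ratio=0.7):
    """Number of days an ad ran before its CTR first dropped below
    fatigue_ratio times its running peak. If that never happens,
    returns the full length of the series."""
    peak = 0.0
    for day, ctr in enumerate(daily_ctr, start=1):
        peak = max(peak, ctr)
        if ctr < fatigue_ratio * peak:
            return day - 1  # healthy days before the fatigue day
    return len(daily_ctr)

# Hypothetical daily CTR for two creatives
pre_tested = [0.012, 0.013, 0.012, 0.011, 0.010, 0.0095, 0.008]
untested = [0.012, 0.011, 0.008, 0.007]
print(creative_lifespan(pre_tested), "days vs", creative_lifespan(untested), "days")
```

Daily CTR is noisy at low volume, so in practice you may want to smooth the series (for example with a multi-day average) before applying a rule like this.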

Frequently Asked Questions About Ad Testing

How much does ad testing cost?

It ranges widely. Predictive AI tools can cost a few hundred dollars a month. Survey panels range from $500 to several thousand per study. In-platform A/B testing costs whatever you put into ad spend. For most small teams, predictive tools offer the best cost-to-insight ratio.

How long should an ad test run?

For live A/B tests: at least 7 days, ideally 14, and don’t call a winner until the difference is statistically significant. For predictive testing: hours. The method determines the timeline, not an arbitrary rule.

What’s the difference between ad testing and A/B testing?

A/B testing is one method within ad testing. Ad testing is the broader practice; it includes surveys, predictive tools, competitor analysis, and live split tests. A/B testing specifically means running two or more variants simultaneously with real traffic.

Can small businesses benefit from ad testing?

Yes, but the right methods matter. Predictive tools and competitor ad analysis are accessible at low cost and don’t require large media budgets. Live A/B testing only makes sense once you have consistent spend. Start light, build the habit, scale the process.

Ad testing isn’t about being perfect before you launch. It’s about being less wrong, and improving faster because of it.